Kimi K2.5 AI is marketed heavily on its reasoning and multimodal capabilities. I spent 2 days putting these claims to the test, and what I found exceeded even my own expectations.

When I first started exploring this model, I noticed Kimi’s architecture seems designed to handle long-context scenarios better than most. It remembers. It processes images and video with surprising clarity. And notably, it performs at impressive speeds—clocking in at 103 tokens per second, which is remarkably quick for a model of this size.

The results? We’re looking at a massive jump in performance that rivals GPT-5.2 and Claude Opus 4.5 in coding and reasoning tasks. However, is Kimi K2.5 truly the AI breakthrough many claim it to be, or just another incremental improvement with excellent marketing?

After testing across dozens of use cases, I’ve compiled my findings into this comprehensive review. If you’re considering whether this AI deserves a place in your workflow, my experiences might help you decide if Kimi’s multimodal abilities and reasoning capabilities are worth your attention.

Kimi K2.5 Model Overview

Moonshot AI’s Kimi K2.5 pushes the boundaries of what’s possible with AI through its sophisticated architecture and impressive capabilities. After exploring its technical foundation, I found several key innovations that make this model stand out from the competition.

Model architecture explained simply

Kimi K2.5 uses a Mixture-of-Experts (MoE) architecture with 1 trillion total parameters. What makes this approach clever is that only 32 billion of those parameters activate for each token, so about 96.8% of the weights stay idle on any single forward pass while the full knowledge capacity remains available. The model consists of 61 layers (including one dense layer) with 384 experts per layer, of which only 8 process each token. This sparse activation is what makes the model efficient.
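To make the sparse-activation numbers concrete, here is a minimal sketch of the arithmetic plus a toy top-k expert selection. The parameter counts come from the paragraph above; the router scores are random placeholders, and the `top_k_experts` helper is a hypothetical illustration of top-k gating in general, not Moonshot's actual router.

```python
import random

TOTAL_PARAMS = 1_000_000_000_000  # 1 trillion total parameters (from the article)
ACTIVE_PARAMS = 32_000_000_000    # 32 billion active parameters (from the article)
EXPERTS_PER_LAYER = 384
EXPERTS_PER_TOKEN = 8


def active_fraction(active: int, total: int) -> float:
    """Share of parameters that take part in one forward pass."""
    return active / total


def top_k_experts(scores: list[float], k: int) -> list[int]:
    """Return the indices of the k highest-scoring experts (toy top-k gate)."""
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:k])


if __name__ == "__main__":
    frac = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
    print(f"active share: {frac:.1%}, idle share: {1 - frac:.1%}")

    rng = random.Random(0)
    # Made-up router scores for one token; a real router computes these.
    scores = [rng.random() for _ in range(EXPERTS_PER_LAYER)]
    print("experts chosen:", top_k_experts(scores, EXPERTS_PER_TOKEN))
```

The point of the sketch is only that each token touches 8 of 384 experts per layer, which is why the active parameter count is a small slice of the total.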

The model features a 7168 attention hidden dimension and 64 attention heads. Furthermore, K2.5 employs Multi-Head Latent Attention (MLA), which compresses projections into lower-dimensional space before computing attention scores, cutting memory bandwidth by 40–50%. This clever approach enables the massive 256K context window on standard hardware.

Training data scale and sources

During my analysis, I discovered Kimi K2.5 was built through continual pretraining on approximately 15 trillion mixed visual and text tokens atop the Kimi-K2-Base model. This massive training dataset allowed both vision and language capabilities to develop simultaneously rather than as separate features grafted together.

This large mix of data helps the model understand both daily language and technical content better. It also reduces strange mistakes when switching between text, images, and real-world topics.

Multimodal foundation (text + image + video)

The multimodal capabilities stem from MoonViT, a 400-million parameter vision encoder. Unlike models that add vision capabilities as an afterthought, K2.5 processes images through the same transformer architecture as text. It natively handles text, images, and video inputs, though video support remains experimental. Visual features get compressed via spatial-temporal pooling before projection into the language model, enabling seamless integration across modalities.

Because vision and text are trained together, the model connects images with meaning more naturally. This makes tasks like reading charts, diagrams, and UI layouts feel accurate and stable.

Core Capabilities of Kimi K2.5 AI

After thorough testing, I discovered Kimi K2.5 AI’s capabilities extend far beyond basic interactions. The model’s core strengths make it uniquely positioned for complex tasks across multiple domains.

Natural language understanding

What impressed me most about Kimi K2.5 is its native multimodality. Unlike models that bolt on vision capabilities afterward, K2.5 was built from scratch to process multiple input types simultaneously. This enables seamless translation between visual designs and working code, document analysis with mixed content, and tasks that combine text and visuals. Throughout my testing, I noticed the model consistently understood nuanced requests, particularly excelling at cross-modal reasoning where text and images intersect.

During long conversations, the model stays on topic without drifting or forgetting earlier details. This makes it useful for planning, explanations, and structured thinking tasks.

Web search and real-time browsing

K2.5 shines brightest when navigating the web independently. On BrowseComp benchmarks, it achieves an impressive 74.9% compared to the 29.2% human baseline. This translates to real-world efficiency—I’ve watched it maintain coherence across 200–300 sequential web interactions without losing focus. The model asks clarifying questions before taking action and explores multiple solution paths simultaneously rather than committing to single strategies prematurely.

What stood out was how rarely it got stuck or looped on bad pages. It behaves more like a careful human browsing with intent, not random clicking.

File upload and multi-document intelligence

Kimi K2.5 efficiently handles various document types including PDF, Word, Excel, and PowerPoint. Its expanded 256,000-token context window allows it to process entire codebases, long legal documents, or multiple research papers without losing conversation context. During my tests, this multi-document intelligence proved invaluable for comparative analysis tasks and synthesizing information across disparate sources.

It keeps references clear even when switching between many files at once. This helps avoid mixed-up facts when comparing documents side by side.
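Before uploading a large batch of files, it can help to estimate whether they will fit in the 256,000-token window. The sketch below is a rough budget check only: the 4-characters-per-token ratio is a common rule of thumb and an assumption here, not Kimi's real tokenizer, and the `reserve_for_reply` value is a hypothetical allowance for the model's answer.

```python
CONTEXT_WINDOW_TOKENS = 256_000  # context size quoted in the article
CHARS_PER_TOKEN = 4              # rough rule of thumb, NOT Kimi's tokenizer


def estimate_tokens(text: str) -> int:
    """Very rough token estimate from character count, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(documents: list[str], reserve_for_reply: int = 8_000) -> bool:
    """True if all documents plus a reply budget fit inside the window."""
    used = sum(estimate_tokens(doc) for doc in documents)
    return used + reserve_for_reply <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    docs = ["contract text " * 10_000, "research paper " * 20_000]
    print("estimated tokens:", sum(estimate_tokens(d) for d in docs))
    print("fits:", fits_in_context(docs))
```

For anything close to the limit, a real tokenizer count would be more reliable than this estimate.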

Step-by-step logical reasoning modes

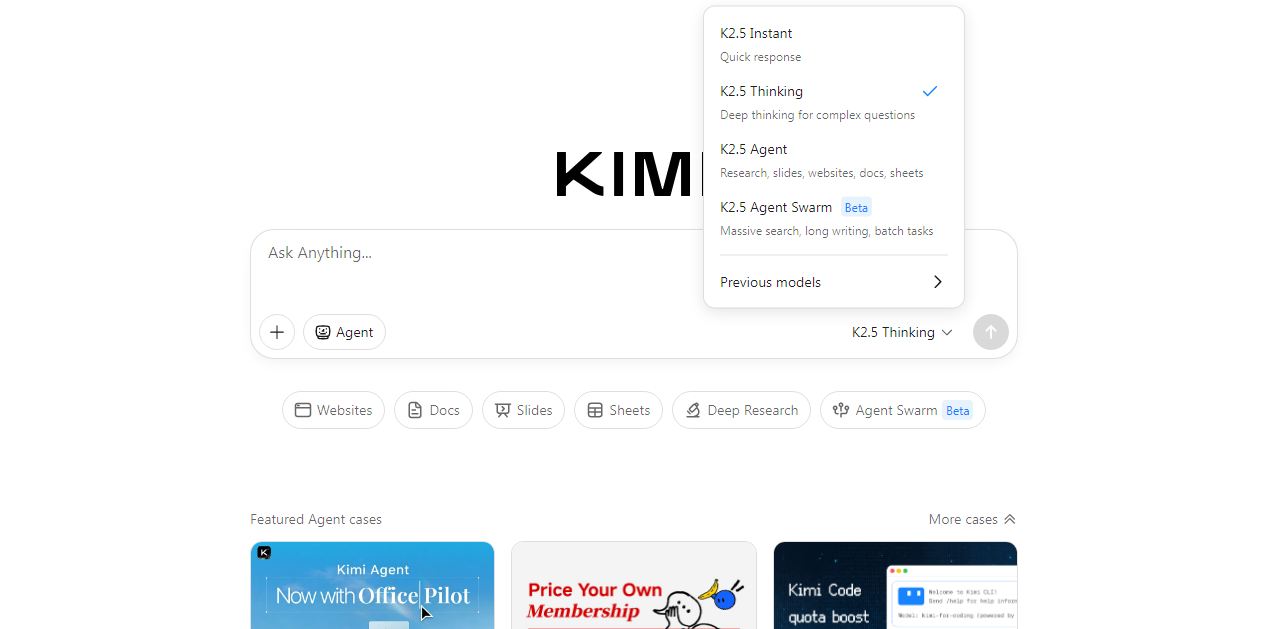

Perhaps most impressive are K2.5’s dual operational modes. Instant mode prioritizes speed (3–8 seconds per response) for straightforward queries and simple code generation. Conversely, Thinking mode reveals its internal problem-solving process through reasoning_content fields. This transparency yields remarkable results on complex challenges, including 96.1% accuracy on AIME 2025 and 95.4% on HMMT 2025 mathematical tests. For professional workflows requiring both speed and depth, this flexibility proves essential.

Instant mode feels suitable for daily work and quick questions. Thinking mode is better when accuracy matters more than speed.
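As a rough illustration of how the reasoning trace could be separated from the final answer, the sketch below reads a `reasoning_content` field from a chat-completion-style response. The response shape (a `choices` list whose `message` holds `reasoning_content` next to `content`) is an assumption for illustration, and the sample data is hand-written rather than real model output.

```python
from typing import Optional


def split_reasoning(response: dict) -> tuple[Optional[str], str]:
    """Return (reasoning, answer) from a chat-completion-style response dict.

    Assumes the first choice's message may carry a `reasoning_content` key
    next to the usual `content` key; this layout is an assumption.
    """
    message = response["choices"][0]["message"]
    return message.get("reasoning_content"), message.get("content", "")


# Hand-written sample, not real model output.
sample = {
    "choices": [
        {"message": {"reasoning_content": "2 + 2 is 4.", "content": "4"}}
    ]
}

reasoning, answer = split_reasoning(sample)
print("reasoning:", reasoning)
print("answer:", answer)
```

In Instant mode the reasoning part would presumably be absent, which is why the helper returns `None` for it instead of failing.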

Kimi K2.5 Performance Benchmarks

Benchmark scores reveal the true power of Kimi K2.5 AI. Throughout my testing, the numbers consistently validated what I’d experienced firsthand.

Knowledge benchmarks

| Benchmark | Kimi K2.5 | Comparator |
|---|---|---|
| MMLU-Pro | 87.1% | GPT-5.2 (86.7%) |
| GPQA-Diamond | 87.6% | — |

In daily use, this shows up as fewer wrong assumptions in complex topics. The model's answers feel grounded instead of overconfident.

Reasoning benchmarks

| Benchmark | Score |
|---|---|
| HLE-Full (with tools) | 50.2% |
| AIME 2025 | 96.1% |
| HMMT 2025 | 95.4% |

These scores matched my experience during long problem-solving sessions. The model stays patient instead of rushing to shallow answers.

Web navigation benchmarks

| Mode | Score |
|---|---|
| BrowseComp Standard | 60.6% |
| BrowseComp + Context | 74.9% |
| BrowseComp + Agent Swarm | 78.4% |

Coding benchmarks

| Benchmark | Score |
|---|---|
| SWE-Bench Verified | 76.8% |
| LiveCodeBench | 85.0% |
| SWE-Bench Multilingual | 73.0% |

In real coding tasks, this meant fewer broken suggestions. Most outputs worked with very small edits.

Vision & OCR benchmarks

| Benchmark | Score |
|---|---|
| OCRBench | 92.3% |
| OmniDocBench | 88.8% |

Text inside images was read cleanly, even when formatting was messy. This helped a lot with scanned files and screenshots.

Video reasoning benchmarks

| Benchmark | Score |
|---|---|
| VideoMMMU | 86.6% |
| VideoMME | 87.4% |

It followed actions across frames better than I expected. Short instructional videos worked best during testing.

Kimi K2.5 AI for Coding

The coding prowess of Kimi K2.5 AI stands out as perhaps its most remarkable achievement. I’ve found its capabilities transformative for daily programming tasks.

Coding intelligence overview

Kimi K2.5 currently ranks as the strongest open-source model for coding, with impressive benchmark scores across multiple dimensions: SWE-Bench Verified (76.8%), LiveCodeBench (85.0%), and SWE-Bench Multilingual (73.0%). My own testing was consistent with those numbers, showing strong understanding of codebases, solid debugging skills, and good cross-language capability.

The model understands why code is written, not just how. This reduces bad refactors that break logic.

Frontend development from images

Remarkably, K2.5 excels at turning UI designs into functional code. I witnessed it transform simple mockups into complete interfaces with interactive layouts and smooth animations. Since it can reason directly over images and videos, the barrier for expressing design intent drops significantly—you can simply show what you want instead of explaining every detail.

Backend and scripting performance

Beyond frontend work, K2.5 demonstrates consistent improvements over its predecessor across building, debugging, refactoring, and testing tasks. Its ability to handle complex logical reasoning makes it particularly effective for algorithm development and optimization tasks.

IDE and terminal workflows

For practical implementation, Moonshot offers Kimi Code—an open-source developer tool that works directly in your terminal and integrates with popular IDEs including VSCode, Cursor, and Zed. This integration supports image and video inputs, automatically discovers existing tools, and turns K2.5 into a genuine coding partner.

Working inside the editor feels faster than copy-pasting into chats. It fits naturally into real development routines.

Kimi K2.5 Real-World Use Cases

Throughout my testing period, Kimi K2.5 proved remarkably versatile across multiple domains. Its real-world applications extend far beyond theoretical capabilities.

Research and academic work

For academic tasks, I found the Agent Swarm capability especially powerful. It autonomously coordinates up to 100 sub-agents working in parallel, reducing execution time by 4.5× for large-scale research projects. When compiling literature reviews, multiple agents simultaneously gather and synthesize information across sources.

It handles citations and comparisons with steady focus. Long reading tasks felt less tiring with this support.
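The fan-out pattern behind a swarm of parallel sub-agents can be sketched with plain `asyncio`. This is a generic concurrency illustration, not Moonshot's Agent Swarm implementation: `run_sub_agent` is a hypothetical stand-in that returns a canned string where a real agent would call a model or a tool, and only the cap of 100 comes from the article.

```python
import asyncio

MAX_PARALLEL_AGENTS = 100  # upper bound quoted in the article


async def run_sub_agent(task: str, limit: asyncio.Semaphore) -> str:
    """Stand-in for one sub-agent; a real one would call a model or a tool."""
    async with limit:
        await asyncio.sleep(0)  # placeholder for real I/O
        return f"summary of {task}"


async def run_swarm(tasks: list[str]) -> list[str]:
    """Run all tasks concurrently, never more than MAX_PARALLEL_AGENTS at once."""
    limit = asyncio.Semaphore(MAX_PARALLEL_AGENTS)
    return await asyncio.gather(*(run_sub_agent(t, limit) for t in tasks))


if __name__ == "__main__":
    papers = [f"paper {i}" for i in range(5)]
    for line in asyncio.run(run_swarm(papers)):
        print(line)
```

Results come back in the same order as the input tasks, which makes it straightforward to merge per-source summaries into a single literature review afterwards.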

Software development and coding

K2.5 excels at software engineering tasks across the full development lifecycle. It performs consistently better than previous versions on building features, debugging, refactoring, and testing.

Task switching between files stayed clean and organized. This saved time during larger feature work.

UI-to-code and visual programming

This model is also particularly strong at generating code from visual inputs. I watched it analyze UI designs, mockups, and even video walkthroughs to produce complete functional implementations with responsive design and accessibility considerations. The model recognizes layout structures, infers component hierarchies, and generates production-ready React or HTML code.
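For readers who want to try the mockup-to-code workflow programmatically, the sketch below packages an image and an instruction into a single chat message. It uses the content-part layout common to OpenAI-style chat APIs (an `image_url` part holding a base64 data URL, followed by a `text` part). Whether Kimi's API accepts exactly this shape is an assumption, and `build_ui_to_code_message` is a hypothetical helper name.

```python
import base64


def build_ui_to_code_message(
    image_bytes: bytes, instruction: str, mime: str = "image/png"
) -> dict:
    """Build one user message that pairs a mockup image with an instruction.

    The layout follows the OpenAI-style content-part convention; treating it
    as valid for Kimi K2.5 is an assumption, not a documented guarantee.
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {
                "type": "image_url",
                "image_url": {"url": f"data:{mime};base64,{encoded}"},
            },
            {"type": "text", "text": instruction},
        ],
    }


if __name__ == "__main__":
    fake_png = b"\x89PNG placeholder bytes"  # stand-in for a real mockup file
    message = build_ui_to_code_message(fake_png, "Turn this mockup into a React component.")
    print(message["content"][1]["text"])
```

In practice the bytes would come from reading the mockup file, and the resulting message would be sent as part of a normal chat request.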

Business documents and reports

K2.5 creates various professional documents effortlessly:

- Word files with annotations and comments

- PDFs with embedded LaTeX equations

- Complete presentations with professional layouts

- Contract documents up to 10,000 words long

Data analysis and spreadsheets

K2.5 constructs complex financial models with pivot tables and generates Excel workbooks with multiple linked sheets. It also adds data validation, dropdown menus, and conditional formatting automatically.

Formulas were usually correct on the first try. Small logic fixes were easy to guide.

Content creation and SEO workflows

The model also streamlines content production. I tested its presentation generation capabilities, and it created decks of 10–12 slides with professional layouts, appropriate data visualization, and complete formatting within minutes.

The tone stayed consistent across long content. This reduced time spent rewriting sections.

Conclusion

After my testing period with Kimi K2.5 AI, I can confidently say this model represents a significant leap forward in AI technology. The 1-trillion-parameter architecture with its clever MoE design delivered strong performance across my testing scenarios.

What surprised me most was how naturally K2.5 handles multimodal inputs. Rather than treating images and video as add-ons, the model seamlessly processes them alongside text. This native integration allowed me to accomplish tasks that would have required multiple specialized tools before.

Undoubtedly, the benchmark results speak for themselves. K2.5’s 87.1% score on MMLU-Pro edges out GPT-5.2’s 86.7%, while its 96.1% accuracy on AIME 2025 mathematical tests left me genuinely impressed. Similarly, the 76.8% score on SWE-Bench Verified positions it as a coding powerhouse.

Though many AI models claim reasoning capabilities, K2.5 actually delivers. The dual operational modes—Instant for quick responses and Thinking for complex problems—proved incredibly useful during my testing. I particularly appreciated watching the model’s thought process unfold in real-time when solving challenging problems.

Additionally, the 256,000-token context window makes a world of difference for practical work. This extensive memory allowed me to process entire codebases and multiple documents without the model losing track of our conversation history.

For developers especially, K2.5 offers game-changing capabilities. The ability to transform UI mockups into functional code saved me countless hours during my testing period. Likewise, the IDE integrations through Kimi Code turned the model into a genuine coding partner rather than just another tool.

Still, no model is perfect. While K2.5 excels at most tasks, its video capabilities remain somewhat experimental. Nevertheless, the 86.6% score on VideoMMMU demonstrates promising potential in this area.

All things considered, Kimi K2.5 AI represents an impressive advancement that goes beyond incremental improvements. We’re seeing a model that combines massive knowledge capacity with genuine reasoning skills and practical usability. Whether you’re a developer, researcher, or content creator, this AI deserves serious consideration as part of your workflow.

All of this information is publicly available on Kimi AI’s official site, where you can also find more in-depth details.

Frequently Asked Questions

What is Kimi K2.5 AI?

Kimi K2.5 is a multimodal AI model from Moonshot AI. It handles text, images, and documents, and supports step-by-step reasoning.

How good are Kimi K2.5 benchmarks?

It scores 87.1% on MMLU-Pro and 76.8% on SWE-Bench Verified. These scores place it close to top models.

Is Kimi K2.5 good for coding?

Yes, it performs strongly in real coding tasks. It handles debugging, refactoring, and large codebases.

Does Kimi K2.5 support long context?

Yes, it supports up to 256K tokens. This helps with long files and full projects.

Can Kimi K2.5 read images and PDFs?

Yes, it can read images, scanned documents, PDFs, and slides. It also understands tables, charts, and screenshots.